This is the last post in the series of articles on real-life facts you need to know for GMAT Critical Reasoning.

Here’s the full list:

- Economics: Supply and Demand

- Economics: Labor and Wages

- Economics: Inflation, unemployment, and interest rates

- Law: “beyond any reasonable doubt”

- Statistics: Statistical significance

Statistical significance

Most research in the natural and social sciences involves statistics. Researchers look at a large number of cases and identify patterns of association. The question often arises: is a particular pattern the result of chance, or does it result from a meaningful connection to the purported cause?

Let’s think about a concrete hypothetical example. Suppose there’s some horrible disease: Mongolian Bagpipe Fever (MBF). For simplicity, suppose there are just two extreme outcomes: either people die of this disease, or they recover completely. Suppose I have a new medicine that I think will help people with MBF, and we test it against a placebo (a standard design in medical tests). Now, consider the following results.

Example #1 is an entirely unrealistic scenario — a case of completely unambiguous certainty: everyone who gets the medicine survives, and everyone who doesn’t get the medicine dies. In real research, nothing is ever this unambiguously clear: that’s why this is unrealistic.

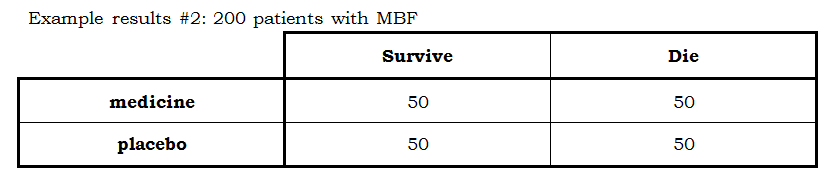

Example #2 is also unambiguously clear: folks survive the disease equally well, regardless of whether they are getting the medicine or the placebo. This clearly suggests: the medicine is no better than a sugar pill — in other words, the new medicine has, in fact, no medicinal value. Again, real data is never this crystal clear, so this is also unrealistic.

Real cases, cases involving real data, are always somewhere between those two scenarios. Consider these two:

These are more representative of real data, insofar as there’s ambiguity and uncertainty. In each case, the survival rate for folks not getting the medicine is 50%. In each case, the survival rate of folks receiving the medicine is at least somewhat higher than 50%. The question is: is this higher rate actually due to the efficacy of the medicine, or is it just a chance fluctuation in the data?
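To get a feel for what a "chance fluctuation" looks like, here is a minimal simulation sketch in Python. Everything in it is an assumption made for illustration: the group size of 20, the gaps of 2 and 8 extra survivors, and the function name `chance_gap_frequency` are hypothetical and are not taken from the charts. It imagines a medicine that does nothing at all, so every patient in both groups survives with probability 50%, and counts how often the medicine group still comes out ahead by a given margin.

```python
import random


def chance_gap_frequency(group_size, min_extra_survivors, trials=10_000, seed=0):
    """Fraction of simulated no-effect studies in which the medicine group
    has at least `min_extra_survivors` more survivors than the placebo group."""
    rng = random.Random(seed)  # fixed seed so repeated runs give the same answer
    hits = 0
    for _ in range(trials):
        # The medicine does nothing here: each patient in either group
        # survives with probability 0.5, independently of the others.
        medicine = sum(rng.random() < 0.5 for _ in range(group_size))
        placebo = sum(rng.random() < 0.5 for _ in range(group_size))
        if medicine - placebo >= min_extra_survivors:
            hits += 1
    return hits / trials


if __name__ == "__main__":
    # Hypothetical gaps: a small lead (2 extra survivors) and a large lead (8).
    for gap in (2, 8):
        share = chance_gap_frequency(group_size=20, min_extra_survivors=gap)
        print(f"lead of {gap} or more by pure chance: {share:.1%} of simulated studies")
```

In a run like this, a small lead for the medicine group shows up by luck far more often than a large one, which is why a small lead on its own says little about whether the medicine works.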

Statisticians evaluate these questions by calculating probabilities: how likely is each scenario to arise from chance fluctuations alone? For the GMAT, you do not need to know anything about the details of that probability calculation. As it happens, in the example #3 chart, results this pronounced or more would occur about 39% of the time by chance, so they are relatively likely to occur by chance. By contrast, in the example #4 chart, results this pronounced or more would occur only about 1.6% of the time by chance, so they are comparatively unlikely to occur by chance. Another way to say this is: the results in example #4 are statistically significant.
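For the curious, here is a rough sketch of one way such a probability can be calculated. The article does not say which calculation produced the 39% and 1.6% figures, so this is an assumption: it uses a one-sided Fisher exact test, a common choice for small two-group studies. The patient counts below are hypothetical and are not read off the charts, so the printed values are not expected to match the article's numbers.

```python
from math import comb


def chance_probability(medicine_survivors, medicine_total,
                       placebo_survivors, placebo_total):
    """One-sided Fisher exact test.

    Assuming the medicine makes no difference, returns the probability that
    the medicine group would contain at least `medicine_survivors` of the
    study's survivors purely by the luck of who was assigned to which group.
    """
    total_patients = medicine_total + placebo_total
    total_survivors = medicine_survivors + placebo_survivors
    most_possible = min(total_survivors, medicine_total)

    # Every way of choosing which patients end up in the medicine group.
    ways_total = comb(total_patients, medicine_total)

    # Ways in which the medicine group holds k survivors, for every k at
    # least as large as what was actually observed.
    ways_as_extreme = sum(
        comb(total_survivors, k)
        * comb(total_patients - total_survivors, medicine_total - k)
        for k in range(medicine_survivors, most_possible + 1)
    )
    return ways_as_extreme / ways_total


if __name__ == "__main__":
    # Hypothetical counts: 20 patients per group, 10 of 20 survive on placebo.
    print("modest lead (12 of 20 survive):", chance_probability(12, 20, 10, 20))
    print("large lead  (17 of 20 survive):", chance_probability(17, 20, 10, 20))
```

The smaller the returned probability, the harder it is to explain the gap between the two groups as luck alone.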

In the case of example #3, the explanation that the results arose by chance is quite cogent: since those results are likely to arise by chance, it’s not surprising that in a chance scenario they would arise. In the case of example #4, the explanation that the results arose by chance is not particularly persuasive. Saying that these results are statistically significant means they are not likely to result from chance, which implies that their explanation as a product of chance is not compelling, which in turn requires us to look elsewhere, for something other than chance, to explain the difference. In example #4, because the medicine was tested against a placebo, the fact that the results are not adequately explained by chance means that the most plausible explanation for these results is that the medicine actually worked. The results in #4 constitute evidence that the medicine worked, whereas the results in #3 do not. In scientifically designed studies, to say results are statistically significant implies that we have found persuasive evidence for the factor we were testing.

All scientific studies involving a large pool of data will involve chance fluctuations. Whenever you are trying to test or measure a certain effect, the first explanation you always have to rule out is that of chance itself: that the results are no more than the product of chance. To say the results are statistically significant is to say (a) the results are not likely to arise by chance; (b) therefore, explaining the results as a product of chance fluctuations is untenable; (c) therefore, we have compelling evidence for whatever factor we were testing. Any time the word “significant” or “significantly” is used in the context of a research study (“significantly increased”, “significantly reduced”, etc.), it directly implies this entire nexus of ideas. In fact, this set of logical relationships underlies the vast majority of studies in the natural and social sciences.
